2019-08-25

[585 Sun, 25 Aug 2019 07:59:41 -0700] Scheduler waiting for Indexer to stop.

[584 Sun, 25 Aug 2019 07:59:36 -0700] Scheduler waiting for Indexer to stop.

[583 Sun, 25 Aug 2019 07:59:31 -0700] Scheduler waiting for Indexer to stop.

[582 Sun, 25 Aug 2019 07:59:26 -0700] Scheduler waiting for Indexer to stop.

[581 Sun, 25 Aug 2019 07:59:21 -0700] Scheduler waiting for Indexer to stop.

[580 Sun, 25 Aug 2019 07:59:16 -0700] Scheduler waiting for Indexer to stop.

[485 Sun, 25 Aug 2019 08:00:18 -0700] No data. Sleeping...

[484 Sun, 25 Aug 2019 08:00:18 -0700] Percent idle time per hour: 96.35%

[483 Sun, 25 Aug 2019 08:00:18 -0700] MAIN LOOP CASE 1 -- SWITCH CRAWL OR NO CURRENT CRAWL

[482 Sun, 25 Aug 2019 08:00:18 -0700] Crawl time has changed!

[481 Sun, 25 Aug 2019 08:00:18 -0700] End Name Server Check

[480 Sun, 25 Aug 2019 08:00:18 -0700] Minimum fetch loop time is: 5 seconds

[479 Sun, 25 Aug 2019 08:00:18 -0700] Crawl Time Changing to 0 -- No Crawl

[478 Sun, 25 Aug 2019 08:00:18 -0700] Start of response was:a:6:{s:1:"a";i:1;s:1:"b";i:0;s:2:"dv";s:4484:"YTo3OntpOjA7YTo3OntzOjI6IklEIjtzOjE6IjEiO3M6NDoiTkFNRSI7czo2OiJEUlVQQUwiO3M6ODoiUFJJT1JJVFkiO3M6MToiMCI7czo5OiJTSUdOQVRVUkUiO3M6MTI1OiIvaHRtbC9oZWFkLypbY29udGFpbnMoQGhyZWYsICcvc2l0ZXMvYWxsL3RoZW1lcycpIG9yIGNvbn

Hi,

Find out where XAMPP stores the PHP error logs and see if there are any errors in them.

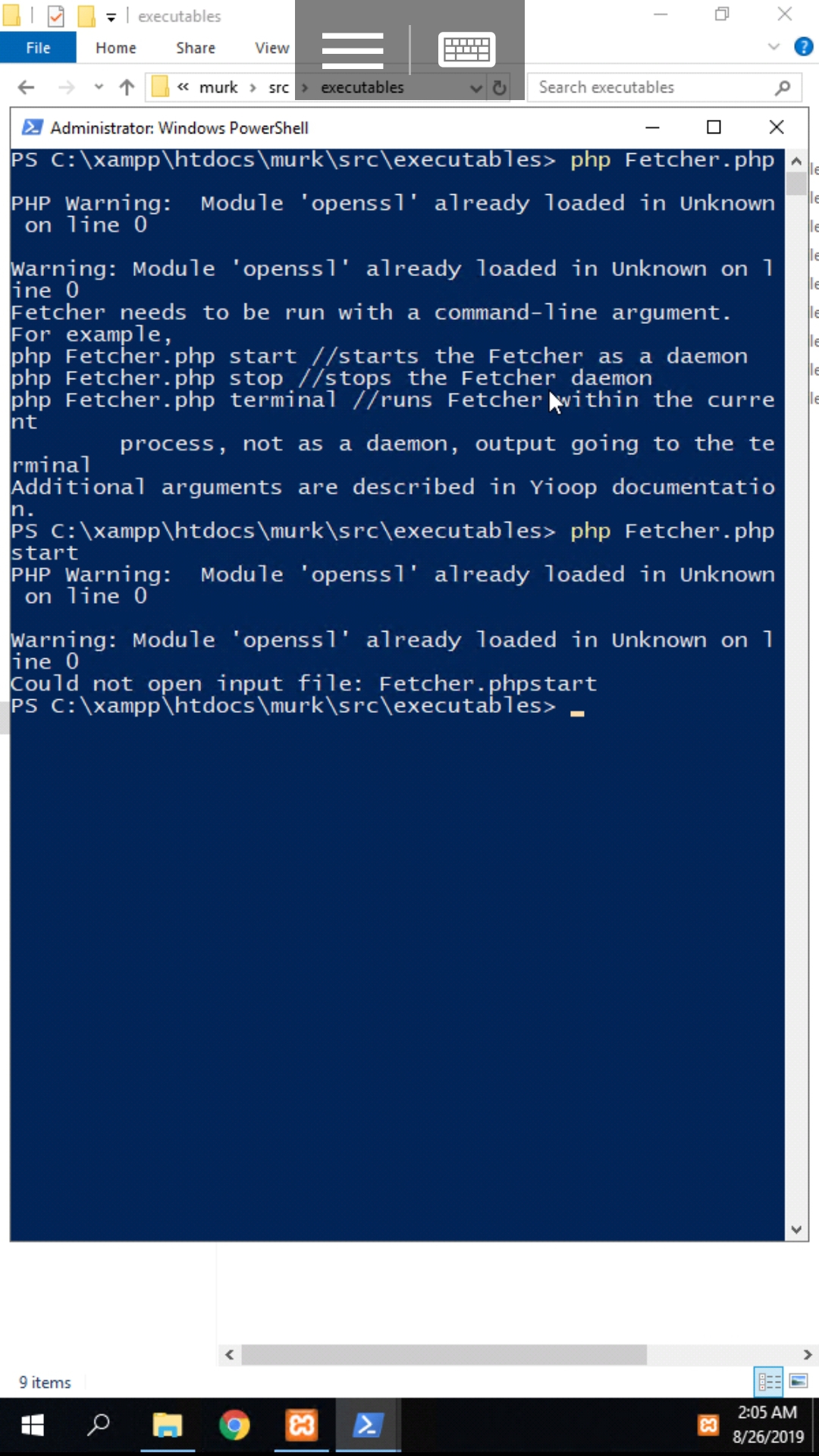

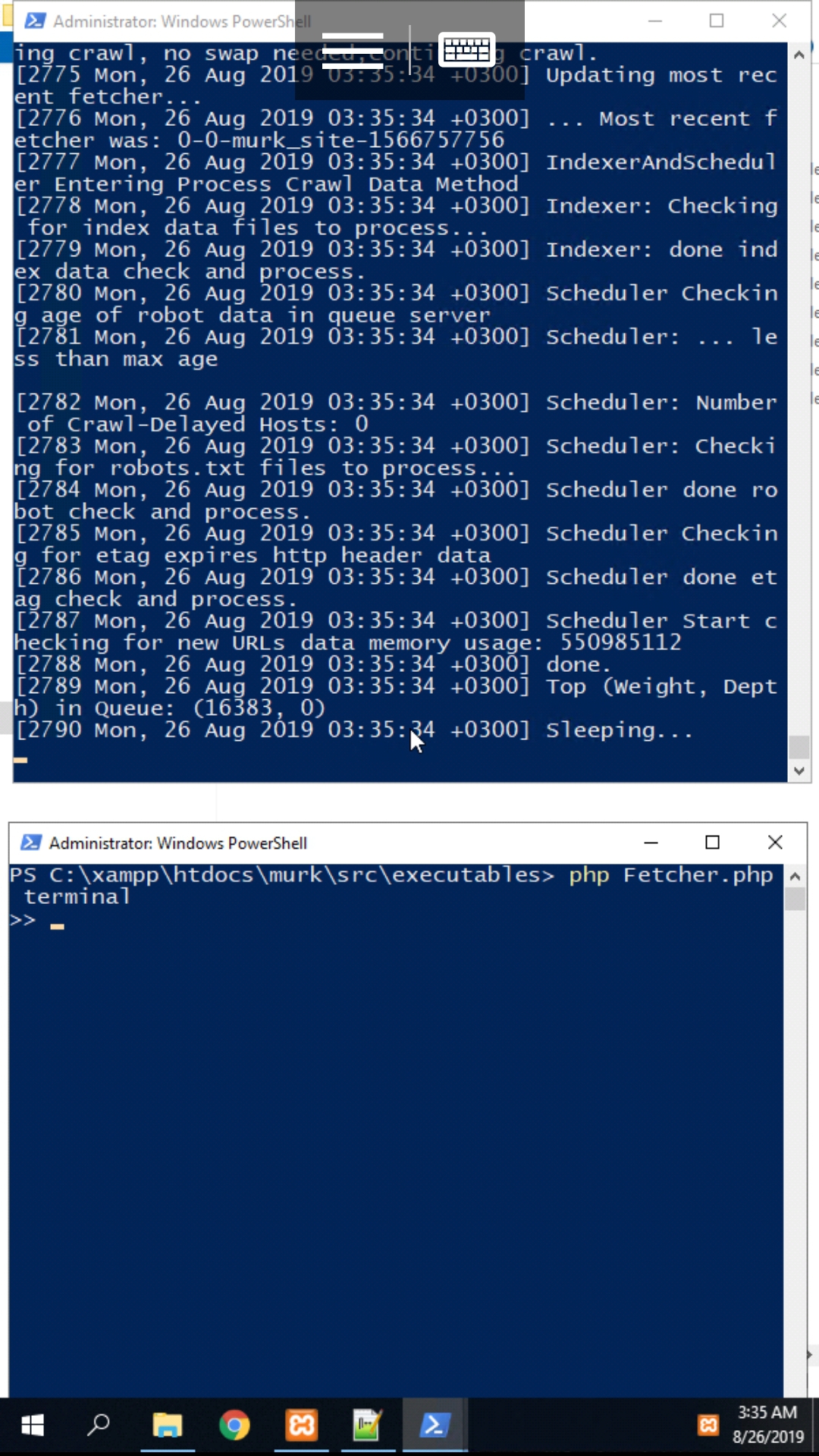

The debugging you were doing in your video seemed reasonable. A crawl is stopped when both the Scheduler and the Indexer write some file. The fact that neither was succeeding in doing this suggests that either the indexer crashed or there was some kind of failure in finding or writing to the proper folder. The same sort of failure could explain why you aren't seeing any log files. Maybe try to do a crawl from the command line and tell me what happens. That is, turn off the QueueServer and Fetchers under Manage Machines. Open a command prompt or PowerShell, cd into src/executables, and type:

php QueueServer.php terminal

Open a second shell, cd into src/executables, and type:

php Fetcher.php terminal

Make sure error reporting is checked under Configure. Start a crawl. You only have to start a crawl once. Wait and see if there are any error messages, and wait until it has crawled a couple of pages (in your case, of wikipedia.org). Click stop crawl. Wait until the index appears. When crawling from the command line, both the scheduler and the indexer are in the same process, so it is a little simpler. If it is still stuck, you can drop me a line at chris@pollett.org and maybe we can arrange a Skype chat.

Best,

Chris

Screenshot captured after the openssl comment in php.ini.

----

By the way, I noticed some problems with writing to the configuration file. Appearance settings do not change... ((resource:Screenshot_20190826-033537.jpg|Resource Description for Screenshot_20190826-033537.jpg))

Yes, it all started from the command line. When I clicked to stop the crawl in the administrative panel on the site, it stopped!

2019-09-13

I recently discovered a bug that was introduced in version 6 when I improved index compression. When a crawl was stopped, IndexShard tried to unlink a file that still had an open file handle. Linux blissfully ignores this and unlinks the file, so things work. Windows, on the other hand, complained. I have (Sep 19) added code to close the file handle. The change is in the git repository, and the fix will be in the next version of Yioop.
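For anyone curious about the general pattern, here is a minimal sketch of "close the handle, then unlink". It is written in Python purely as an illustration and is not the actual Yioop PHP change; the helper name `remove_shard_file` and the `.shard` temp file are made up for the example. As far as I know, Python shows the same platform difference: removing a file that is still open raises an error on Windows, while on Linux the directory entry simply goes away.

```python
import os
import tempfile


def remove_shard_file(handle, path):
    """Close an open file handle, then delete the file it points at.

    Hypothetical helper for illustration only; not Yioop code.
    """
    # Closing first is what makes the delete safe on Windows, where a
    # file that is still open generally cannot be removed.
    if not handle.closed:
        handle.close()
    os.unlink(path)


# Create a throwaway file and keep a handle open on it, mimicking a
# shard file that is still open when the crawl is stopped.
fd, path = tempfile.mkstemp(suffix=".shard")
handle = os.fdopen(fd, "wb")
handle.write(b"posting data")

remove_shard_file(handle, path)
print(os.path.exists(path))
```

Calling `os.unlink(path)` without closing the handle first would be the analogue of the buggy behavior: fine on Linux, an error on Windows.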

(Edited: 2019-09-13) I recently discovered a bug that was introduced in version 6 when I improved index compression. When a crawl is stopped it was trying to unlink a file with an open file handle in IndexShard. Linux blissfully ignores this and unlinks the file, so stuff works. Windows was complaining on the other hand. I have (Sep 19) added code to close the file handle and it is in the git repository and will be fixed in the next version of Yioop.

(c) 2024 Yioop - PHP Search Engine